Developing locally with ASP.NET Core under HTTPS, SSL, and Self-Signed Certs

Last week on Twitter @getify started an excellent thread pointing out that we should be using HTTPS even on our local machines. Why?

You want your local web development setup to reflect your production reality as much as possible. That covers URL parsing, routing, redirects, avoiding mixed-content warnings, etc. It's very easy to accidentally find oneself on http:// when everything in 2018 should be under https://.

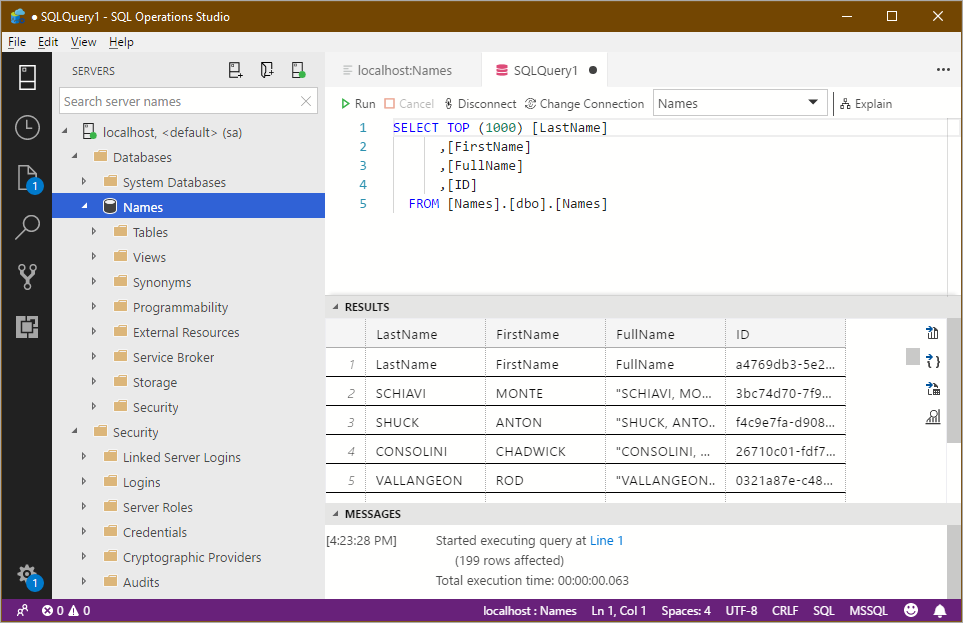

I'm using ASP.NET Core 2.1 which makes local SSL super easy. After installing from http://dot.net I'll "dotnet new razor" in an empty folder to make a quick web app.

Then, when I "dotnet run" I see two URLs serving pages:

```
C:\Users\scott\Desktop\localsslweb> dotnet run
Hosting environment: Development
Content root path: C:\Users\scott\Desktop\localsslweb
Now listening on: https://localhost:5001
Now listening on: http://localhost:5000
Application started. Press Ctrl+C to shut down.
```

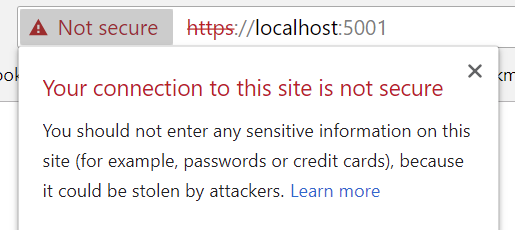

One is HTTP over port 5000 and the other is HTTPS over 5001. However, if I hit https://localhost:5001, I may see an error:

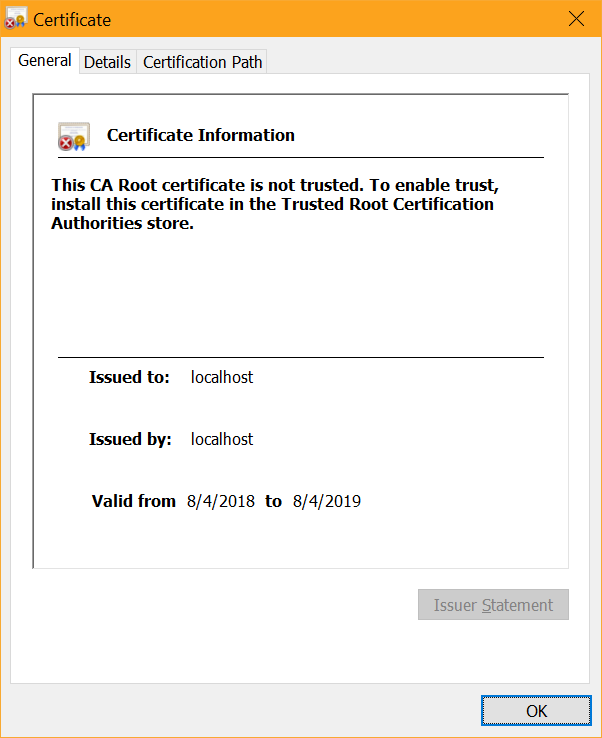

That's because this is an untrusted SSL cert that was generated locally.

There's a dotnet global tool built into .NET Core 2.1 to help with certs at dev time, called "dev-certs."

```
C:\Users\scott> dotnet dev-certs https --help

Usage: dotnet dev-certs https [options]

Options:
  -ep|--export-path  Full path to the exported certificate
  -p|--password      Password to use when exporting the certificate with the private key into a pfx file
  -c|--check         Check for the existence of the certificate but do not perform any action
  --clean            Cleans all HTTPS development certificates from the machine.
  -t|--trust         Trust the certificate on the current platform
  -v|--verbose       Display more debug information.
  -q|--quiet         Display warnings and errors only.
  -h|--help          Show help information
```

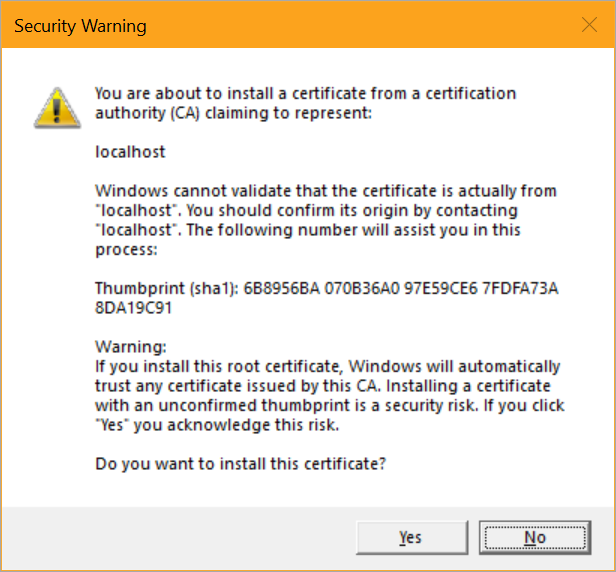

I just need to run "dotnet dev-certs https --trust" and I'll get a pop-up asking if I want to trust this localhost cert.

On Windows it'll get added to the certificate store and on Mac it'll get added to the keychain. On Linux there isn't a standard way across distros to trust the certificate, so you'll need to follow the distro-specific guidance for trusting the development certificate.

Close your browser and open up again at https://localhost:5001 and you'll see a trusted "Secure" badge in your browser.
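If you'd rather check trust from code than from the browser badge, here's a minimal sketch of a console app that requests the HTTPS URL. It assumes the app from "dotnet run" is still listening on https://localhost:5001; the class name CheckDevCert is made up for illustration. HttpClient validates the server certificate by default, so an untrusted dev cert should surface as a failed request rather than a response.

```csharp
using System;
using System.Net.Http;

// Hypothetical helper, not part of the "dotnet new razor" template.
public static class CheckDevCert
{
    public static int Main()
    {
        // Assumption: the web app is still running and listening on this URL.
        const string url = "https://localhost:5001";

        using (var client = new HttpClient())
        {
            try
            {
                // Blocking on the task keeps this compatible with a non-async Main.
                var response = client.GetAsync(url).GetAwaiter().GetResult();
                Console.WriteLine($"TLS handshake succeeded, HTTP status: {(int)response.StatusCode}");
                return 0;
            }
            catch (HttpRequestException ex)
            {
                // An untrusted certificate typically ends up here.
                // So does a server that isn't running, so read the message.
                Console.WriteLine($"Request failed: {ex.Message}");
                Console.WriteLine(ex.InnerException?.Message);
                return 1;
            }
        }
    }
}
```

A failure here doesn't prove the cert is the problem, since a stopped server fails the same way, so treat it as a quick sanity check only.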

Note also that by default HTTPS redirection is included in ASP.NET Core, and in Production it'll use HTTP Strict Transport Security (HSTS) as well, avoiding any initial insecure calls.

```csharp
public void Configure(IApplicationBuilder app, IHostingEnvironment env)
{
    if (env.IsDevelopment())
    {
        app.UseDeveloperExceptionPage();
    }
    else
    {
        app.UseExceptionHandler("/Error");
        app.UseHsts();
    }

    app.UseHttpsRedirection();
    app.UseStaticFiles();
    app.UseCookiePolicy();

    app.UseMvc();
}
```
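The two middleware calls above pick up their settings from options you can register in ConfigureServices. Here's a rough sketch of what that might look like, not the template's actual code. The values are illustrative assumptions: the 30-day max age and 307 status match what I understand the defaults to be, and port 5001 is just the local port from the "dotnet run" output.

```csharp
using System;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    // Only ConfigureServices is shown; in a real app this sits alongside
    // the Configure method in your existing Startup class.
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddMvc();

        // Options read by app.UseHsts(). Values are assumptions for illustration.
        services.AddHsts(options =>
        {
            options.MaxAge = TimeSpan.FromDays(30);
            options.IncludeSubDomains = false;
        });

        // Options read by app.UseHttpsRedirection().
        services.AddHttpsRedirection(options =>
        {
            options.RedirectStatusCode = StatusCodes.Status307TemporaryRedirect;
            options.HttpsPort = 5001; // assumed local dev port
        });
    }
}
```

Start with a short HSTS max age. Browsers cache the header, so a long value is hard to back out of if something goes wrong.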

That's it. What's historically been a huge hassle for local development is essentially handled for you. Given that Chrome is marking http:// sites as "Not Secure" as of Chrome 68, you'll want to consider making ALL your sites Secure by Default. I wrote up how to get certs for free with Azure and Let's Encrypt.


About Scott

Scott Hanselman is a former professor, former Chief Architect in finance, now speaker, consultant, father, diabetic, and Microsoft employee. He is a failed stand-up comic, a cornrower, and a book author.

About Newsletter

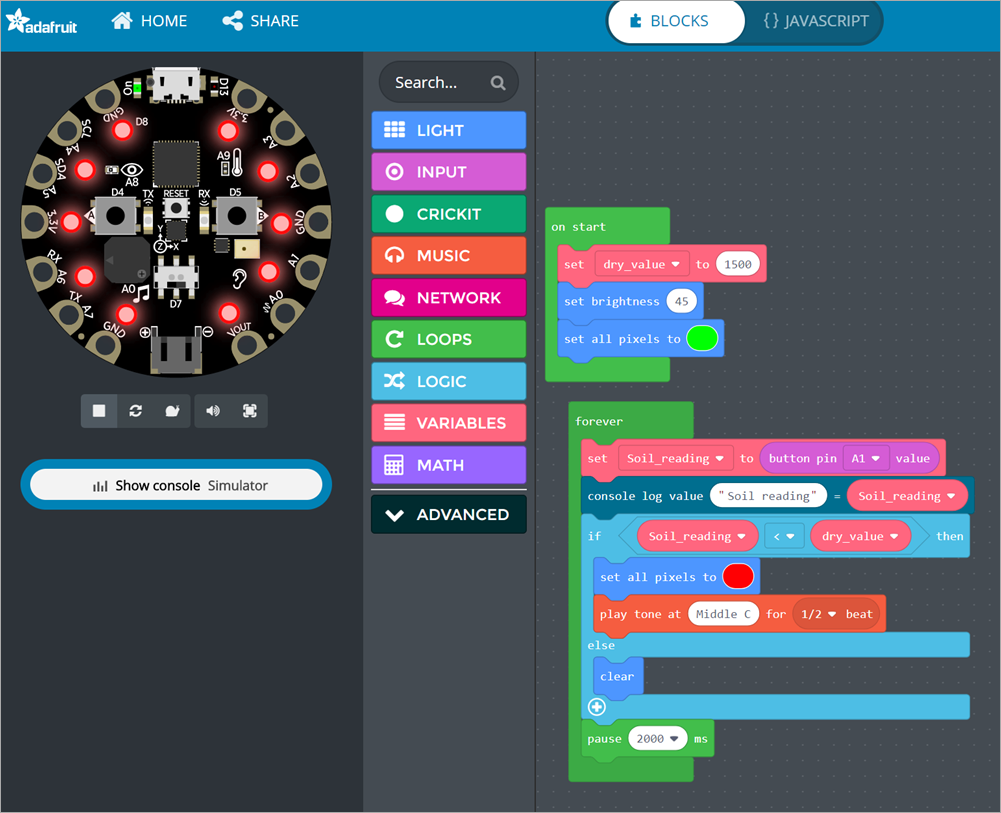

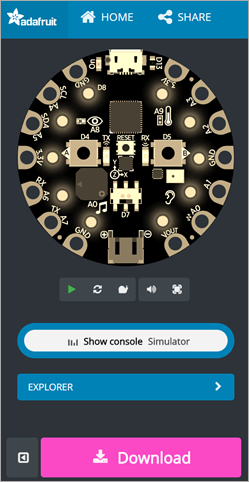

There's a ton of great open source hardware solutions out there. Often they're used to teach kids how to code, but their also fun for adults to learn about hardware!

There's a ton of great open source hardware solutions out there. Often they're used to teach kids how to code, but their also fun for adults to learn about hardware!

I recently met some folks that didn't know that

I recently met some folks that didn't know that

The best way to learn about code isn't just writing more code -

The best way to learn about code isn't just writing more code -

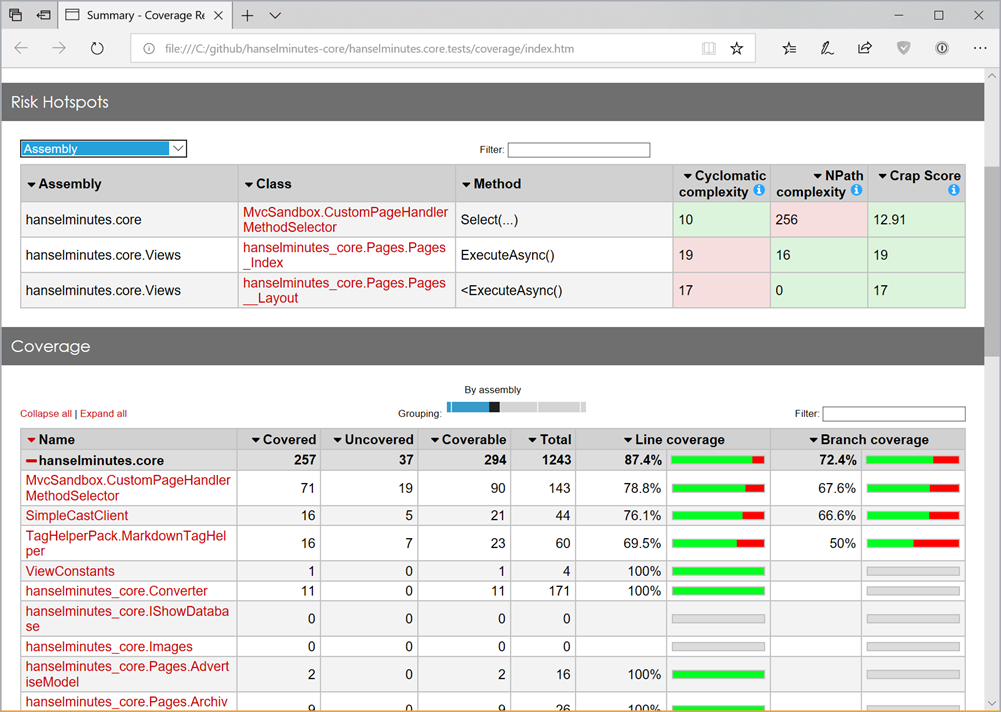

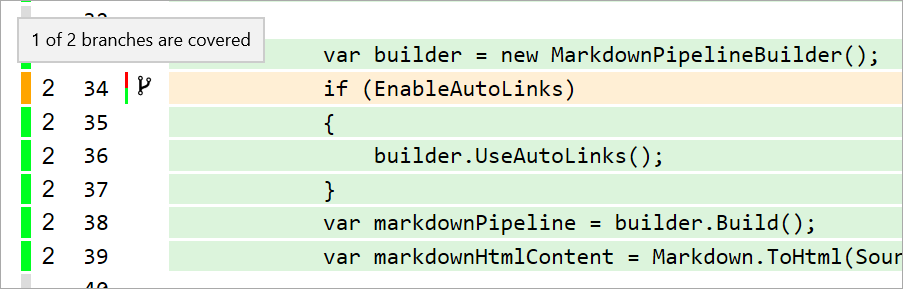

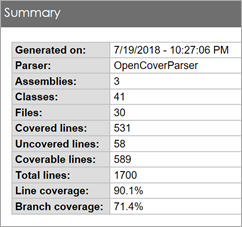

Cool. My tests run as usual, but now I've got a coverage.xml in my test folder. I could also generate LCov or Cobertura reports if I'd like. At this point my coverage.xml is nearly a half-meg! That's a lot of good information, but how do I see the results in a human readable format?

Cool. My tests run as usual, but now I've got a coverage.xml in my test folder. I could also generate LCov or Cobertura reports if I'd like. At this point my coverage.xml is nearly a half-meg! That's a lot of good information, but how do I see the results in a human readable format?